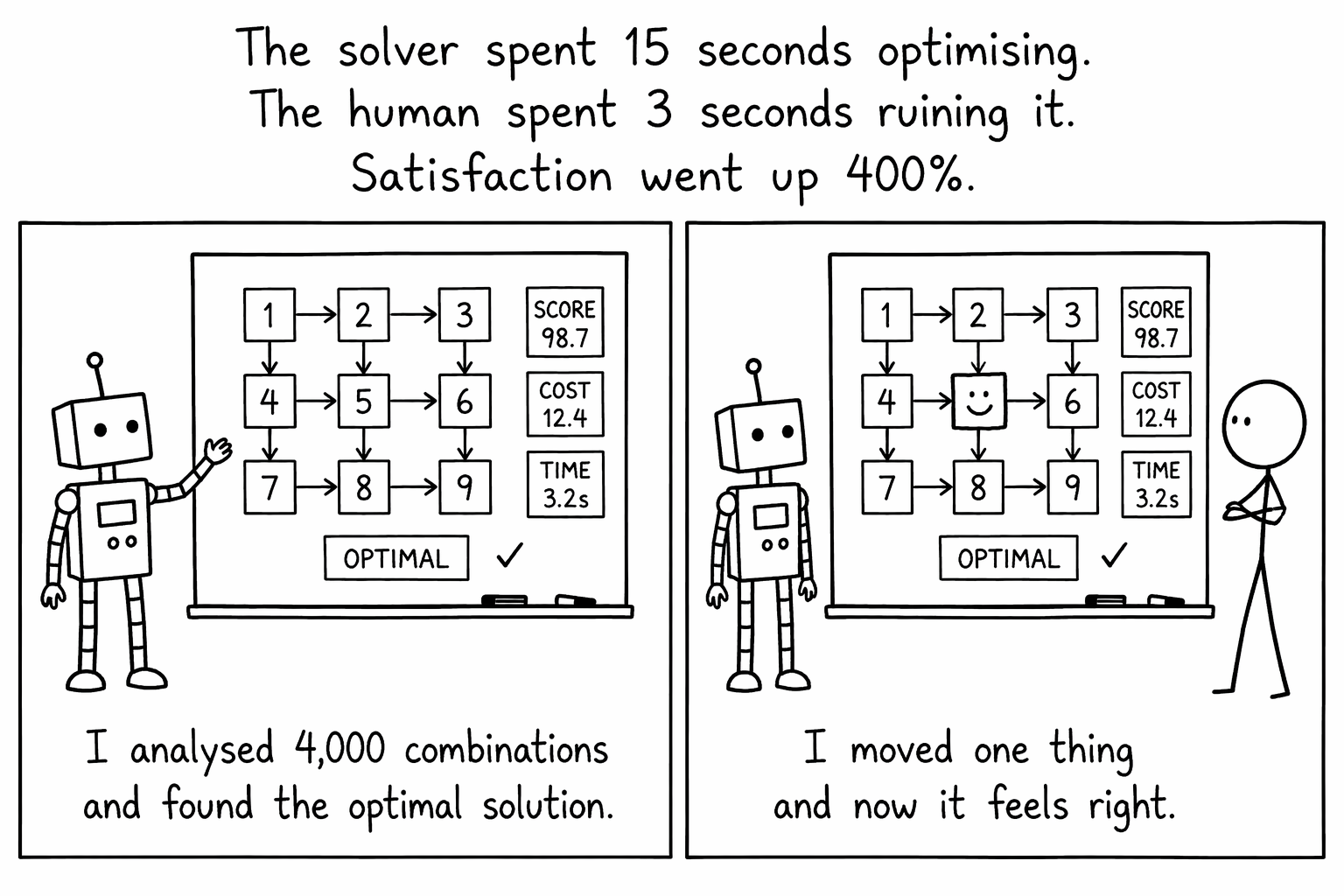

The solver is good. I am allowed to say that because I built it and I have watched it work through thousands of combinations of weather, budget, drive time, and tee time viability to land on an itinerary that genuinely makes sense. It does in fifteen seconds what would take you an evening with a spreadsheet and six browser tabs.

And yet.

The very first thing most people want to do when they see their itinerary is change something.

The Netflix problem

Netflix spent years and millions of dollars building a recommendation engine. It knows what you watched, when you paused, what you rewatched, what you abandoned twelve minutes in. It has more data on your viewing habits than you do. It is, by any objective measure, very good at predicting what you will enjoy.

And what do you do when you open Netflix? You scroll past every recommendation and spend twenty minutes looking for something else. The algorithm is fine. Choosing is just part of the experience. Research on Netflix's own system found that even with highly accurate predictions, users consistently browse beyond their recommendations [1].

Spotify does the same dance. Discover Weekly is brilliant. It surfaces tracks you would never have found on your own. But people still build their own playlists. They drag songs around, delete the ones that don't feel right, add that one track their mate sent them. Studies on recommender systems confirm this: giving users even a small amount of control over the output increases both satisfaction and trust [3]. The algorithm gave them a starting point. They made it theirs.

The IKEA effect, but for itineraries

There is a well-documented quirk of human psychology called the IKEA effect [2]. People value things more when they have had a hand in building them. A wobbly bookshelf you assembled yourself is, in your heart, worth more than a perfect one that arrived fully built. It is objectively worse. You still like it more. You can point at it and say "I did that."

Trip planning works exactly the same way. A solver can produce the optimal itinerary. Mathematically optimal. Weather-adjusted, budget-balanced, drive-time-minimised. Beautiful. But if someone hands you a finished plan and says "here, do this," it feels like homework. If you tweak two things and rearrange a day, suddenly it feels like your plan.

The funny thing is, the tweaks are often small. Swap one course because your uncle played it last year and said the greens were average. Move a morning round to the afternoon because you are not a morning person and want to sleep in on Day 3. Swap in that little nine-hole course near your accommodation because it looked charming on the website.

Small changes. Enormous difference in how the trip feels.

What the solver cannot know

Here is the thing no algorithm can fully account for: you. The solver knows course ratings. It knows green fees, hilliness scores, historical weather patterns, and whether the sun will set before you finish your back nine in July. It is very thorough about facts.

It does not know that your group always books a table at that one restaurant in Queenstown on the first night, so Day 2 needs a late start. It does not know that you have been wanting to play Paraparaumu Beach since you read about it in a magazine five years ago, even though it is slightly out of the way. It does not know that your group has a running joke about always playing the worst-rated course in every region, just to see.

These are not edge cases. They are the entire point of a holiday.

Google Maps figured this out

Google Maps will give you the fastest route from Auckland to Napier. It accounts for traffic, road works, speed limits. Optimal. And people still drag the route over to go through Taupo because they want to stop for a pie at the bakery near the lake. Google did not resist this. They built the drag handle right into the interface, because they understood that a route is a suggestion, not an instruction.

Same with GPS navigation. It recalculates. It does not lecture you. It does not pop up a window saying "my route was 4 minutes faster." It accepts your decision and works with it. That restraint is the reason people trust it.

So we are building the drag handle

FairwayPlan is getting post-solve editing. The solver does its thing, hands you a full itinerary, and then you can change it. Swap a course. Move a round to a different slot. Drop a round entirely and take a rest day. The solver gave you the 95%. The last 5% is yours.

We are being deliberate about this. When you make a change, the app does not re-solve the entire itinerary and undo your other tweaks. It respects what you chose. It updates only the things that need updating (weather data for the new course, drive times, clothing recommendations) and leaves everything else alone.
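For the technically curious, here is roughly what "leave everything else alone" can look like in Python. This is a sketch under my own simplifying assumptions, not FairwayPlan's actual code: the `Round` fields, the `swap_course` function, and the two lookup callbacks are all hypothetical names invented for illustration, and drive time is treated as a single number per round.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Round:
    """One round in an itinerary (hypothetical shape, for illustration only)."""
    day: int
    slot: str                            # "morning" or "afternoon"
    course: str
    forecast: Optional[str] = None       # derived data, refreshed after an edit
    drive_minutes: Optional[int] = None  # derived data, refreshed after an edit


def swap_course(
    itinerary: List[Round],
    index: int,
    new_course: str,
    fetch_forecast: Callable[[str, int], str],
    fetch_drive_minutes: Callable[[str], int],
) -> List[Round]:
    """Return a new itinerary with the round at `index` moved to `new_course`.

    Only the edited round has its derived data refreshed. Every other round,
    including any the user tweaked earlier, is carried over untouched.
    """
    if not 0 <= index < len(itinerary):
        raise IndexError(f"no round at position {index}")

    old = itinerary[index]
    edited = replace(
        old,
        course=new_course,
        forecast=fetch_forecast(new_course, old.day),
        drive_minutes=fetch_drive_minutes(new_course),
    )
    # Copy the list so the original itinerary is left as it was.
    result = list(itinerary)
    result[index] = edited
    return result


# Usage with stand-in lookups (a real app would call its weather and routing services).
plan = [
    Round(day=1, slot="morning", course="Course A", forecast="sunny", drive_minutes=20),
    Round(day=2, slot="afternoon", course="Course B", forecast="showers", drive_minutes=35),
]
edited_plan = swap_course(
    plan,
    index=1,
    new_course="Course C",
    fetch_forecast=lambda course, day: "cloudy",
    fetch_drive_minutes=lambda course: 15,
)
```

The point of the design is what the function does not do: there is no call back into the solver, so nothing the user has already chosen can be silently reverted.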

It is a small thing, technically. A dropdown, a confirmation, a few API calls. But it changes the relationship between the user and the tool. The solver stops being the authority and becomes the assistant. Which is what it should have been all along.

The 95/5 rule

I have started thinking about this as the 95/5 rule, and I think it applies to almost every recommendation system. Self-determination theory [4] puts a name to why: autonomy is a basic psychological need. People are more motivated by, and more committed to, outcomes they had a role in shaping.

Do 95% of the work. Do it well. Use the data, run the numbers, optimise the thing. Then step back and let the human do the last 5%. They will probably make it slightly worse by any objective measure. They will also care about it ten times more, because they had a hand in it. And a trip people care about is a trip people actually take.

The best tools in the world are the ones that do the heavy lifting and then hand you the paintbrush for the finishing touches. Nobody wants to prime the walls. Everyone wants to pick the colour.

So that is what we are building. A solver that does the priming. And a very nice colour chart.

References

- [1] Gomez-Uribe, C. A., & Hunt, N. (2015). The Netflix Recommender System: Algorithms, Business Value, and Innovation. ACM Transactions on Management Information Systems, 6(4), 1–19.

- [2] Norton, M. I., Mochon, D., & Ariely, D. (2012). The IKEA effect: When labor leads to love. Journal of Consumer Psychology, 22(3), 453–460.

- [3] Knijnenburg, B. P., Willemsen, M. C., Gantner, Z., Soncu, H., & Newell, C. (2012). Explaining the user experience of recommender systems. User Modeling and User-Adapted Interaction, 22(4–5), 441–504.

- [4] Ryan, R. M., & Deci, E. L. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. American Psychologist, 55(1), 68–78.